
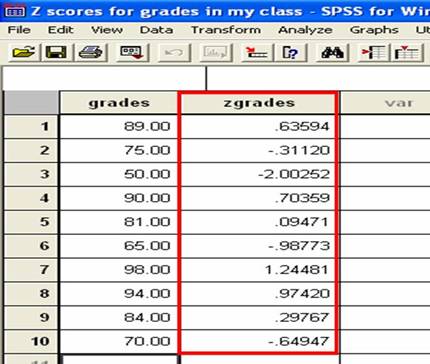

Topic Coherence To Evaluate Topic Models

Human judgment is not correlated with perplexity (the likelihood of unseen documents), and this has motivated further work that tries to model human judgment directly. This is a hard task in itself because human judgment is not clearly defined: for example, two experts can disagree on the usefulness of a topic.

One can classify the methods addressing this problem into two categories. Intrinsic methods do not use any external source or task beyond the dataset, whereas extrinsic methods use the discovered topics for external tasks, such as information retrieval, or use external statistics to evaluate topics.

An early intrinsic method defines measures based on three prototypes of junk and insignificant topics. The three prototypes for junk topics are the uniform word-distribution, the empirical corpus word-distribution, and the uniform document-distribution.

The coherence measure introduced in 'Optimizing semantic coherence in topic models' (Mimno, D., Wallach, H. M., Talley, E., Leenders, M., & McCallum, A., in Proceedings of the Conference on Empirical Methods in Natural Language Processing) produces a vector of topic coherence scores with length equal to the number of topics in the fitted model. It can be read as $P(\text{rare word} \mid \text{common word})$, that is, how much a common word triggers a rarer word. However, in human word association, high-frequency words are more likely to be used as response words than low-frequency words. It would be interesting to understand the effect of this choice by running more experiments and comparing the two options.

We propose a way of improving the topic coherence score by restricting the training corpus to the relevant information in the document in the context of job.

We will be using the UMass and CV coherence measures for two different LDA models: a 'good' and a 'bad' LDA model. The good LDA model will be trained over 50 iterations and the bad one for 1 iteration.
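To make the idea of a per-topic coherence score concrete, here is a minimal sketch of a UMass-style score computed from document co-occurrence counts. It assumes each topic is given as a list of its top words ordered from most to least probable, and each document as a list of tokens. The function name `umass_coherence`, the smoothing parameter `eps`, and the toy corpus are illustrative assumptions, not part of any particular library.

```python
import math


def umass_coherence(topics, documents, eps=1.0):
    """Return one UMass-style coherence score per topic (illustrative sketch).

    topics:    list of topics, each a list of top words (most probable first)
    documents: list of documents, each a list of tokens
    eps:       smoothing count added to the co-document frequency (assumed 1)
    """
    doc_sets = [set(doc) for doc in documents]

    def doc_freq(*words):
        # Number of documents that contain every word in `words`.
        return sum(1 for d in doc_sets if all(w in d for w in words))

    scores = []
    for top_words in topics:
        score = 0.0
        # Pair each word with every higher-ranked word in the same topic.
        for m in range(1, len(top_words)):
            for l in range(m):
                d_l = doc_freq(top_words[l])
                if d_l == 0:
                    continue  # skip words absent from the corpus
                d_ml = doc_freq(top_words[m], top_words[l])
                # Smoothed estimate of log P(lower-ranked | higher-ranked).
                score += math.log((d_ml + eps) / d_l)
        scores.append(score)
    return scores


# Toy corpus (made up for illustration).
documents = [
    ["cat", "dog", "pet"],
    ["cat", "dog", "vet"],
    ["stock", "bond", "market"],
    ["stock", "market", "trade"],
]
topics = [
    ["cat", "dog"],    # words that co-occur in documents
    ["cat", "stock"],  # words that never co-occur
]
scores = umass_coherence(topics, documents)
print(scores)
```

The result has one entry per topic, and the topic whose words appear together in documents receives the higher score. A model trained for many iterations would be expected to produce more topics of the first kind than a model trained for a single iteration.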
